Chris on Software Testing

A blog by Chris Neville-Smith.

-

Closed App Store or open Android Market? Both, please.

Apple and Google are at war over whose system of accepting apps is better. Here's why they should offer both.

There is little doubt that one of the biggest changes in technology over the last ten years is the adoption of the smartphone. As well as changing the habits of mobile phone users, it's meant a lot of changes to computers in general. Not all have been good - it has propagated some ridiculous patent lawsuits, and it's encouraged the rise of some highly dubious "freemium" games - but one of the best things it's brought, in my opinion, is the concept of the app store.

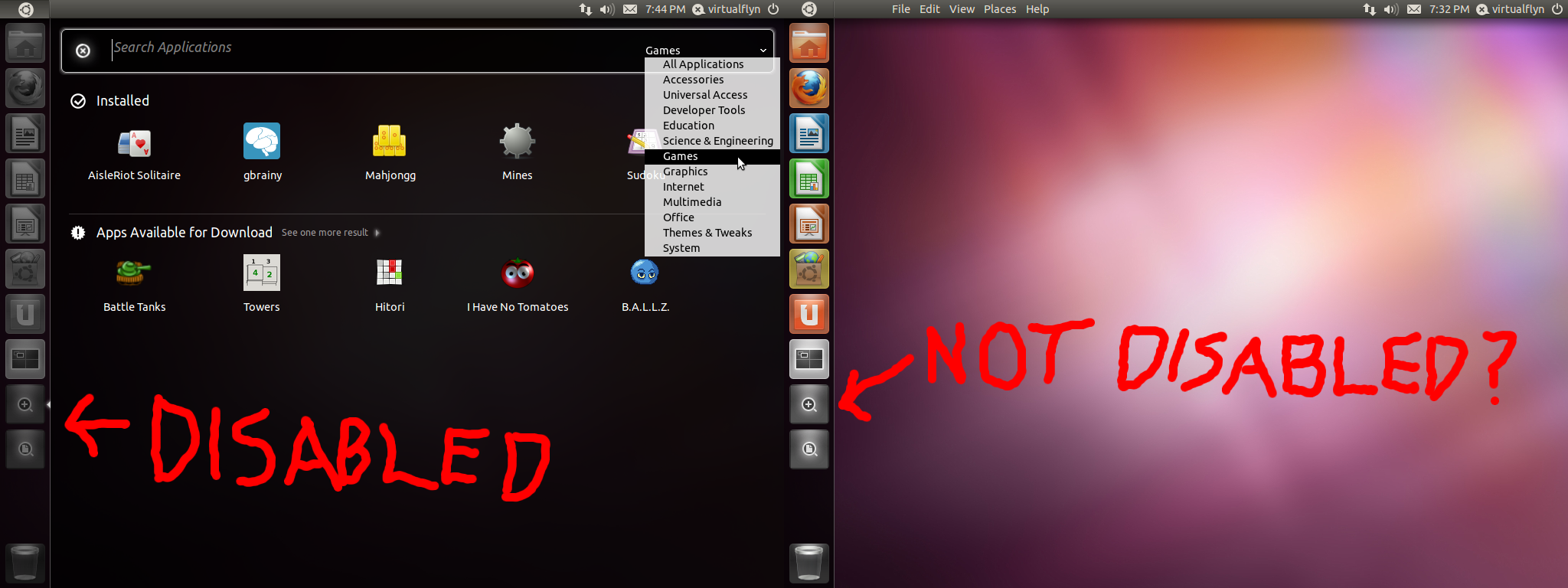

In the Linux world, the idea of the app store is old hat. For decades, most Linux distros have been organised into packages. Some are integral to the system, such as the kernel and desktop, some are standard packages such as LibreOffice, and some are extra packages that users add to their system. To add an extra package, you simply go to the Add/Remove programme, click on what you want, and Linux downloads and installs it for you. There are a lot of advantages to this method: it automatically installs any other software you need to run the program, everything is automatically updated, and if you ever want to uninstall the program, Linux does it for you rather than relying on a dubious uninstallation package that came with the program. Although most software installed this way is free, it has been used for paid apps too.

So, in theory, it is welcome that this practice has been adopted on smartphones. In practice, however, things are more complicated. There are two big differences between Linux and smartphones. Firstly, this approach has been opened up from a small, mainly tech-savvy group to the masses of smartphone owners. Secondly, this method of installing software has suddenly become a lucrative way of earning money. As a result, there are now thousands of app writers all jostling for status in a highly competitive market. And this is where Apple and Google have differed heavily in their answers to this challenge.

Apple's solution has been to vet apps through its App Store - a major departure from most Linux distros, which broadly welcomed anything. Needless to say, this has been controversial, and it would be easy to write a whole article bashing Apple for this. Firstly there's the obvious argument of whether Apple should be dictating to iPhone users what apps they can and can't buy. There have been some dubious decisions to ban apps that come across as censorship of opinions, such as Phone Story. Apple has been ridiculed for the apparently arbitrary way that apps get accepted or rejected. So far, so bad.

In Apple's defence, however, I'm not sure it's the sinister evil Apple conspiracy people have suggested. Microsoft took a similar approach to Apple for Windows 8 apps. I read through those guidelines for a previous piece of work, and I can tell you that there's nothing particularly unreasonable in them. Nonetheless, the outcome was a mess, with stories of perfectly legitimate apps getting rejected for strange reasons. I suspect the root problem is that vetting policies, no matter how well-intentioned they may be, are in practice a nightmare to implement.

Apple, however, argue that their vetting procedure ensures that users can be assured of quality and security in their apps. The claim of quality is again dubious, because Apple gets criticised for letting through apps that aren't stable. But on the issue of security, they've got a point, and this is where Google's Android Market comes into play.

Smartphones, being essentially another kind of computer, share the same security principles as normal computers, but there are a number of differences. Potentially, the spoils of a hacked smartphone outweigh those of a hacked computer. You can plunder address books, sell on details of the holder's personal movements, and make money by getting the phone to dial premium rate numbers. Luckily, Android and Apple have both proved themselves to be quite resilient to ne'er-do-wells - there's certainly no sign of a return to the bad old days where your Windows XP computer could catch all sorts of nasty viruses just because you visited the wrong site with IE6 and ActiveX.

But the chink in the armour is apps. No matter how secure an operating system is, a rogue app you willingly install is free to inflict all sorts of bad things - and there is little Android/iOS/Windows can do to protect you. Is that programme you just installed accessing your address book for legitimate reasons, or is it sending it on to identity thieves? Is the programme making calls because it's meant to, or is it trying to sting you with premium rate numbers? There's no easy way for the phone to know. And whilst this could be a threat to any operating system, it's Android that gets targeted time and time again.

It's a serious problem. In the old days, it was easy to blame users for downloading a dubious virus-ridden program they found on the internet, but in the days of app stores where legitimate programs and virusware both come from the same place, how do you tell which is which? Most people cannot reasonably be expected to have background knowledge of the latest app scams out there. In theory, whenever you download an app you are presented with a list of actions your new app is and isn't allowed to do, but it's so confusing to the layman that the default response is to say yes to everything.

So, yes, the iPhone has the edge over Android here. Apple can claim that the only way you can be sure of being protected from rogue apps is a properly vetted App Store. And the only way you can have a properly vetted App Store is with an iPhone. But that means buying into Apple's idea of what you can and can't do with a smartphone. It's a high price to pay, and it's a choice people shouldn't have to make.

So, here is my proposed solution to all at Microsoft, Apple and Google: stop arguing about whether it's better to have an open or closed app store, and instead offer both. Vetted store or open store, let the users take their pick.

So, how would this work, and why would it differ from what Android does now? Well, the way I think this should be done is for smartphones, by default, to take apps from a vetted store. Exactly how fussy they want to be over software quality or adherence to standards is up to them, but the important one is security. Someone answerable to Apple/Android/Windows has to have a look at the App to see if it's doing something it's not supposed to. But the power to opt out remains with the users. If they want to switch to an open store, by all means display a message explaining the risks of unvetted applications, but if the user selects "Yes" to "Are you sure", that's the user's choice.

This, I think, is a good balance. People who aren't interested in a choice of a gazillion apps aren't going to be bothered by the limited range on offer from a vetted app store. That saves them the problem of picking the reputable weather app from the dodgy one, and saves the hassle of understanding the confusing security permissions messages. People who want a wider choice, who understand the risks of unvetted third-party apps, are free to do their own thing, as is anyone who finds the rules of the vetted store too restrictive for their liking.

I also believe it would do Apple (and Microsoft) some good to offer this choice. If few people choose to opt out of your vetted app store: great, you've got the confidence of your customers. If customers opt out in droves, that is a warning sign that you're doing something wrong, but also an opportunity to identify the problem and put things right. Surely that has to be better than people hating your vetting policy but being forced to stick with it. The advantage to Android from this policy, of course, is offering customers worried about spiked apps a safe option and peace of mind.

Wow, a blog article that picks out good points of both Android and iPhones. Is that allowed?

-

The big bang theory

If you look beyond the political point-scoring over the latest debacle on Universal Credit, the real lesson is that the "big bang" approach to IT projects rarely pays off.

A completely inaccurate depiction of the Big Bang.

Also not a good approach to most software projects.

Well, I hate to say I told you so, but... I told you so. Just under a year ago, I idly speculated that the next big story about an IT cock-up might be the upcoming Universal Credits system. I won't go over the whole thing in detail, but it boiled down to two concerns: firstly, I was sceptical over whether the intended launch of October 2013 was realistic; and secondly, I know from my experience of ID cards that there is a culture in the civil service of making promises that cannot be delivered. And what do I find yesterday? Oh dear, oh dear, oh dear.

Now, before I jump on any bandwagons, it's helpful to put this in a bit of context. Firstly, the National Audit Office is notorious for nit-picking (as is the Public Accounts Committee), and their supposedly damning reports are often little more than minor points blown out of proportion by the press. Secondly, benefit reform is a hugely controversial issue and a lot of criticism (and defence) of this IT project will be down to ideological stance on benefits rather than whether the product does the job. (For the record, I think the principles of Universal Credit - that work should always pay, and the simplification of a bloated, complex system - are a good idea, but there are valid points about using the reform as a smokescreen for cuts.) Nevertheless, it looks like there's more to this one than political hype. The October launch is now just six pilot sites, which is a common Civil Service method of back-pedalling in a way that lets them claim they "met" the deadline.

So what's gone wrong? There is a good summary of reported mistakes on BBC News, and the thing that struck me the most about this is how similar these mistakes are to the mistakes made with ID cards. Comparing what's happening now to what happened with ID cards, I can tell you the following:

- The over-ambitious timetable: Oh dear, I'd really hoped that the civil service might have learnt their lesson here. I remember the first thing that set alarm bells ringing was my first look at the development timetable. Even with the promises that you could easily adapt an existing piece of software (they thought a program designed to issue security passes for buildings would do the job), I could tell straight away the schedule was unrealistic. You need to allow at least one month of testing and stabilisation for every month of programming - this project tried to fit six months' worth of work into three months.

The new passport issuing system has fared little better - what was supposed to roll out in 2010 is only just being rolled out now. They never seem to learn this lesson.

- There was no detailed plan: Now, for once, I can't accuse civil servants of repeating an old mistake. ID cards was based on the V-model method of software development, where requirements, system design and component design were done in order, and testing started with components and worked up to the whole system. This was a reasonable approach, but - as is often the fate of V-model-based projects - there were a lot of oversights in design that resulted in too many disruptive last-minute changes.

This project, however, seems to have made the opposite mistake. It is reported that this project used the Agile Model, where you start off by creating and testing a prototype, and through many iterations add in extra features, developing the design as you go along. The Agile method can work if you have a suitably flexible project. This was not. There was a fixed deadline, a big undertaking, and complex contracts awarded to suppliers. The plans for ID cards were probably too rigid, but it seems we've gone from one extreme to the other.

- A bunker mentality developed: This one doesn't surprise me at all. One of the things that shocked me the most about the ID cards project was the amount of toadying that went on. I sat in a training session where people tried to out-do each other on how enthusiastic they were about ID cards, including - I am not making this up - a helpful suggestion for how to encourage take-up, which was to only give benefits to people with ID cards, as if this would suddenly make the scheme popular. It was also taken as gospel truth that once ID cards were introduced, no future government could reverse it. (Um, yes they can, and yes they did.)

Any attempt I made to highlight the mildest of concerns about the state of the IT systems got nowhere, so it's no surprise if DWP underlings with constructive concerns fared little better.

- Poor financial management: I didn't see much of the financial side so I can't make much comment about this. What I do know is that there was a massive discrepancy in pay between permanent staff and interims brought in from outside, which created a lot of resentment within the organisation. To be fair, the civil service has made a lot of progress cutting down on expensive consultants when they're not needed, but evidently that didn't happen with Universal Credit.

- High staff turnover: Unclear what the exact cause of this was in DWP's case, but a common effect of badly-managed IT projects is that everyone wants to get out. This is especially common when staff are expected to have their lives outside of work put on hold in order to work round increasingly unreasonable demands, as happened with ID cards. To be fair, I believe the people at the top of the project genuinely tried to maintain a work-life balance, but there were too many people down the management chain who ignored this.

- Inadequate control over suppliers: I will be fair to the ID cards management here: managing the relationship between government department and contractor is difficult. You need clearly defined areas of responsibility so that everyone knows who is responsible for what, but you also want to avoid fiddly micromanagement going on between the two. The management of the ID cards project were perfectly aware of this challenge.

But for all of their efforts to get this right, something went badly wrong. I did a large part of the testing at the offices of the developers, and it wasn't long before I heard developers openly making derogatory remarks about individual senior civil servants and interims when I was clearly in earshot. The relationship between the programmers and the testers on the ground was all right, thank goodness, and we managed to ignore the squabbling and work together, but I dread to think what was going on upstairs.

- Ignoring recommendations: This one I can't comment on, because I have no idea if any recommendations were made to the ID cards project, let alone whether they were acted on - those sorts of things were kept out of view. What I do know is that, as well as the futility of anyone on the inside trying to give advice, successive layers of management were seemingly oblivious to the increasing notoriety of the scheme outside the bubble. Doesn't bode well for listening, and it seems that the bunker mentality has struck again.

In defence of the DWP, the project managers were probably unaware of all the mistakes made in the ID cards project. This is because we never got to hear about them. ID cards were binned on political grounds by a change of government, so the problems with the IT system never came to public attention. This time, however, there's no way you can fail to notice what's gone wrong. Some serious lessons need learning here before yet another IT project is embarked on with silly timescales.

But there is one other issue here, one that I think is more important than all of the above. I think the original mistake was to embark on an IT project of this scale in the first place. This is what I call a "Big Bang" project, where a date is set in the future when everything switches over at once: IT systems, rules, maybe even working practices. Big Bang projects are extremely risky because if they go wrong, they can go massively wrong, in this case threatening to derail a flagship government policy. Sometimes a Big Bang approach is unavoidable; ID cards, for example, were a completely new thing that required the development of a completely new system. Even so, they had the safeguard of not needing to switch over millions of existing records.

I cannot understand why it was necessary to make the Universal Credits IT project so complicated. When one purpose of the project is to simplify the benefits system, one would have thought the easiest approach would be to adapt the existing systems to work with the new rules. I'd have thought it would have been reasonably easy to make the existing system for Jobseeker's Allowance (which already carries out means-testing on things such as income and savings) also handle Universal Credit claims. Housing Benefit and the other benefits replaced by Universal Credit could then be set to zero, hopefully preserving the interfaces between the systems. The DWP system will need replacing eventually, as all systems do.
But it's better to do that as a project in its own right, where you're free to do it over a sensible timescale without deadlines for government policy getting in the way.

It is not clear whether this debacle was down to a government minister imposing this silly timescale on civil servants, or civil servants choosing this silly timescale and telling government ministers it was achievable. That is not important. Well, it is important if you're more interested in scoring political points one way or the other, but that is what happens after every IT cock-up, and lessons don't get learned. Neither will lessons be learned if civil servants and government ministers blame each other. If things are ever to change, ministers and mandarins alike must appreciate a simple rule: the big bang approach rarely pays off in IT.

-

Bring on the naked laptops

If we’re serious about using technology to empower users, people should have the choice to buy a laptop, tablet or smartphone without the software.

This is my new laptop. Observant readers will notice that this is a Chromebook with Ubuntu running on it. As Linux fans know, Ubuntu and most other Linux distributions can be legally downloaded for free and installed on any computer. The only question is what computer you choose. For me, a Chromebook seemed like a good bet: they are cheap low-spec laptops, probably incapable of running Windows 7, but Ubuntu is a resource-light operating system and I use my desktop for anything resource-intensive. Chrome OS is heavily geared towards users of Google services, like Gmail and Google Docs, but I’m installing my own software so that doesn’t matter. So, let’s buy a Chromebook and install Ubuntu. Simple, huh? Simple?

Hah, I wish! You have no idea how much blood, sweat and tears I’ve been through to get to what you can see in that photo. It all boils down to this thing on Chromebooks called secure boot (aka verified boot). Oh boy. This is something that, in theory, is meant to protect you from hackers up to no good – I have used the words “in theory” for a reason, but I’ll come back to that later. As far as Chromebooks are concerned, there is a way of switching off secure boot by going into “developer mode” (which isn’t widely advertised, but if the intention is to stop people who don’t know what they’re doing from fiddling with settings, that’s fair enough). Unfortunately, even in this mode, you still can’t boot from a CD/USB drive, which is the normal way of installing an operating system. Never mind, there’s an Ubuntu derivative out there called Chrubuntu, specially designed to be downloaded and installed from a command prompt in Chrome OS. Okay, that doesn’t sound too bad.

So, how did it do? Well, firstly I discovered that the way you enter developer mode on a Samsung Chromebook is different from other Chromebooks. Then I made several failed attempts to install Chrubuntu and eventually realised that the installation script I was using doesn’t work for my particular model of Samsung Chromebook, which needed a different installation script. Once it was installed, I had another nightmare getting LibreOffice installed, which was down to the UK Ubuntu mirror at uk.archive.ubuntu.com not being able to supply software for the ARM architecture (you have to use gb.archive.ubuntu.com instead). There are still other issues to fix, but for now Chrubuntu is only for open-source masochists. Yes, nothing beats the thrill of getting your new laptop to work, amidst fears you may have spent £230 on an oversized paperweight, but this is not an experience I’d recommend for Joe Public.

Why is this so frustrating? Because it doesn’t have to be this complicated. If you want Ubuntu or any other operating system on a desktop computer, you simply buy a computer with a blank hard disc, insert the installation CD or USB stick, boot up, and away you go. Laptops are a different story. It is near-impossible to buy a laptop without a pre-installed operating system on it, paid for by you. And even if you think you’ve managed to buy a laptop without Windows, you may still end up paying for it owing to a ridiculous arrangement where laptop manufacturers have to pay Microsoft for one Windows licence per machine whether or not it gets installed. But most Linux users choose to bite the bullet, pay a £40-ish premium for a product they didn’t ask for, and forget about it.

Now even this is getting worse. Microsoft have also been joining in with secure boot, but unlike Google, there’s not always an opt-out – and that would have included my ARM-based laptop had it been pre-installed with Windows 8 instead of Chrome OS. Microsoft argues that secure boot is necessary to protect you from rootkits, but is this really a proportionate response to the threat? The suspicion is that the real threat that concerns Microsoft is the threat of pesky users running operating systems Microsoft doesn’t want you to use. This may sound paranoid, but as Microsoft have previously attempted to stop “naked” desktop computers being sold (those 5% or so sold without pre-installed operating systems), and they were slow to announce that some (not all) kinds of computers would be allowed to opt out of secure boot, I struggle to find any more charitable explanations.

Tablets and smartphones are little better. These devices are, for all intents and purposes, another kind of computer. The only real difference is that they have touchscreens instead of keyboards. Therefore, one would have thought you should be able to insert a USB stick and install whatever you like. Instead, installing another operating system on an Android device is about as complicated as my experience with a Chromebook. And even that is at the mercy of Google voluntarily providing a mechanism to opt out of Android on a smartphone. They might withdraw this in a future update, just like Sony did on the PlayStation. As for anyone who bought an ARM-based Windows 8 tablet – forget it.

Now, I don’t believe in leaping on the “Google good, Microsoft bad” bandwagon – there are plenty of questionable practices where Google has a case to answer. But on this very important issue of consumer choice, Microsoft is clearly the worst offender. Google hasn’t been terribly helpful to users wanting to customise Android devices or Chromebooks as they see fit, but they have stopped short of outright blocking it. Microsoft, however, are actively preventing it (as does Apple, although I doubt many people would want to spend a fortune on an iPad if the first thing they’re going to do is scrub the disk), and that’s something I fundamentally disagree with on principle. If you’ve bought a computer with your own money, it’s nobody else’s business how you choose to use it.

But even the opt-outs offered by Google aren’t good enough. If we are serious about computers empowering users, users should have the option of buying any kind of computer – desktop, laptop or tablet – without the software. As this is possible for desktops, there’s no excuse to claim it is too difficult, and certainly no excuse for laptops, which are functionally identical to their larger cousins. The current grand scheme to empower customers in Europe is the “browser choice” screen in Windows; I personally think that is a waste of time, because the choice of browsers was already there, just made a little more obvious. Instead, we need to look at where users don’t get a choice. It’s bad, it’s getting worse, and the availability of naked laptops would do a lot of good. -

Time to wise up to Freemium

The recent case of a £1,700 Zombies vs Ninja bill should be a wake-up call for how ruthlessly children are being used as cash cows.

“That will be £699.99, please.”

For all the criticisms I have of Apple, one of the things they got right was the App Store. They weren’t the first people to use this model (Linux distros had already used this approach for years), but they did pioneer mainstream adoption. This has brought a lot of benefits: software installed through repositories such as App Stores easily remains up to date, you don’t have to search on the internet to find the program you’re after (and therefore there’s little danger of accidentally installing a spiked program masquerading as the one you’re after), and it’s easy to remove anything you don’t like (as opposed to hoping the program came with a working uninstall mechanism). It’s also opened up the market for paid apps beyond the big players, and pushed down prices; no more will we be forking out £29.99 for very basic games. On the whole this has been a major step forwards.

Not everything about it has been welcomed. There are quite a few iffy questions about Apple and Windows 8’s over-zealous vetting policies, which I’ve discussed before. But lately I’ve seen a new breed of programs coming to App Stores which I think needs questioning. These are known as “Freemium”, and these apps, usually games, are free to download. But if you want to advance in the game, you have to pay real money to receive in-game power-ups. Let’s make this clear: it is nothing like the old model of a free demo version and a paid full version – they make their money from customers who pay for upgrades again, and again, and again. Freemium advocates might argue that if you want to be a football champion, you have to spend money on a decent kit and training, but I don’t agree. This is cyber-land, where “training” and “kit” are merely a matter of changing a few ones and zeros in your favour, and unlike real training and kit they cost nothing to make. I would rather liken this to an owner of a cricket pitch charging you extra for bowling overarm.

To be fair to the Freemium companies, this isn’t entirely a new thing. The practice of gamers paying real money to better themselves in imaginary games has been going on for years without their help. For many years, people have willingly paid real money for virtual gold in games such as Warcraft on a virtual black market, in spite of the game owners trying their best to stop it. Other practices have been taken to such ridiculous extremes that a Chinese player even ended up killing someone for real over the sale of an online sword. It was perhaps inevitable that someone would realise that there was a whole market for paying real money to win an imaginary game. I want nothing to do with this – this seems to me like buying a gold medal and thinking this makes you an Olympic champion – but is it really my business? No-one’s being forced to pay for this, so why force someone not to pay?

Good question. I have frequently berated Microsoft and Apple for depriving their customers of freedom of choice, especially in their app markets. I have never accepted the argument that customers need protecting from apps deemed to be of inferior quality to the alternatives, so it’s not easy to suddenly claim we need to protect customers from unethical methods of payment. Surely we can decide for ourselves if we’re all adults?

The problem is, we’re not all adults. I’ve noticed that Freemium games are increasingly being aimed at children – and young ones at that. Young children, with no concept of financial responsibility, are the easiest targets for tat whose retail price vastly outweighs the design and production cost. Ruthless marketing predates apps (remember the controversy over Pokémon Red and Pokémon Blue?), and indeed predates computer games altogether. My Little Pony didn’t need computers to churn out endless ranges of new ponies, and woe betide any parent who says no.
The reason I am picking on My Little Pony is that this greed has blatantly gone straight into their latest app, where you have to pay as much as £35 to unlock new virtual ponies. Smurfs’ Village has also come under heavy criticism for racking up large bills, and recently a 5-year-old child racked up a £1,700 bill with the blatantly child-aimed Zombies vs Ninja. Not all Freemium games are aimed at children, but increasingly it’s the kiddie games that are behaving the worst.

The predictable response is to scorn parents for not having control of their children. I think that’s a poor excuse, no better than a supermarket blaming parents for tantrums over sweets it deliberately placed at the checkout. With the shameless targeting of children, combined with the extortionate amounts Freemium games try to bleed from customers and the fact that the contracts used by mobile operators make it difficult to keep track of what you’re spending, we’ve got a very serious problem on our hands. Probably the most unkind but telling analogy I’ve heard is the business model of the cocaine dealer: the first hit is always free.

I don’t want Freemium banned. As with other consumer products pushed at children, creating new rules rarely solves the problem – they are too easy to get round. There is a case for pressing Apple and Google to be clearer about in-game purchases – as it stands, some iPad apps mention them only as an optional courtesy, and one has to question Apple’s priorities given how restrictive its vetting procedure is. What we do need is for the public to rise up as one and stop appeasing these tactics. With many freemium apps costing five times what you’d have paid for something similar in a shop, it’s only a matter of time before people start wising up. And this may happen sooner than you think, because the mood is already turning ugly.

Done right, Freemium could still work as a business model.
If they carry on taking users for granted the way they do now, it could be the next Instagram. And, unlike Instagram, it won't be missed. var pkBaseURL = (("https:" == document.location.protocol) ? "https://chrisnevillesmith.me.uk/piwik_test/piwik/" : "http://chrisnevillesmith.me.uk/piwik_test/piwik/"); document.write(unescape("%3Cscript src='" + pkBaseURL + "piwik.js' type='text/javascript'%3E%3C/script%3E")); try { var piwikTracker = Piwik.getTracker(pkBaseURL + "piwik.php", 4); piwikTracker.trackPageView(); piwikTracker.enableLinkTracking(); } catch( err ) {} -

Who needs 1984 when we’ve got Foursquare?

Online snooping is getting worrying – but if we want to stop this, we must ask some fundamental questions about social media.

The next poster in the series says "Facebook is privacy"

When George Orwell created Nineteen Eighty-Four and Big Brother in 1948, he could scarcely have imagined how the future would actually turn out. I don’t mean the nightmarish vision of the Ministry of Truth, Ministry of Plenty, Ministry of Peace and Ministry of Love, but two things he would never have guessed. Firstly, the emergence of the god-awful reality TV show Big Brother (and all the other god-awful reality TV programmes it spawned), and secondly, a load of persecution complex-ridden Middle Englanders who say “It’s just like 1984” every time they get a speeding fine. I suppose some things bear a resemblance to the book, but they tend to be things like petty council officials invoking anti-terrorist laws over littering. All in all, it’s a bit of a damp squib.

But fear not, Mr. Orwell, all is not lost. Recently we have seen the arrival of a new program called RIOT (Rapid Information Overlay Technology). This little tool uses information from social networks to track the movements of individual people. It has been suggested this could be used as a way of monitoring people who are about to commit a crime – cue analogies to Precrime in Minority Report – but just like its fictitious counterpart, there are serious questions over how reliable this would actually be. Certainly there’s not much enthusiasm from the police. Which makes me think the key market might be employers: a retail manager who wants to know if his staff are shopping at competitors, say, or a civil servant checking which pesky underlings attend opposition party meetings in the run-up to an election. This could be fantastic news – if you are a control freak with lots of money and power.

There is just one small but crucial difference from what Orwell had in mind. The subjects of Oceania were monitored day and night in everything they did, whether they liked it or not, through cameras, curfews and spies. RIOT, on the other hand, runs entirely off information that its unwitting subjects quite happily put in the public domain. Love your Facebook status updates? Can’t live without your Tweets? So does RIOT. All this information about where you are and what you’re doing is most useful, thank you very much, citizen. Better still, why not take information from Foursquare, a service that makes it trendy to reveal your location as often as possible? Who needs “Big Brother is Watching You” when you can say “Hey there, are you going to put all your private information online like the COOL KIDS do, or are you a LOSER?”

This is not the first time someone has written an online snooping program that uses publicly-accessible information. Previous examples include “Please Rob Me”, to inform you, me, and any local burglars which houses are empty, and sex-pest bonanza “Girls Around Me”, showing you the location and physical appearance of females nearby.[1] I should point out that these programs were both written to prove a point – albeit in a highly irresponsible way – but that’s little consolation for anyone affected by them. The Inner Party must be kicking themselves they never thought of this.

Now, as someone with no Facebook, Twitter or Foursquare account, it would be easy for me to scoff and tell everyone affected that they brought it on themselves. But the reality, I think, isn’t quite so simple. This is an issue that I think can only be addressed by asking some fundamental, far-reaching questions about social media.

The problem is that, for many people, social media is now effectively compulsory. I have lost count of the number of people who say they’ll Facebook me, as if this were the only way to communicate with people nowadays. (I mean, haven’t these people heard of e-mail?) I personally think that friends who won’t stay in contact if you’re not on Facebook aren’t worth having as friends, but I have a choice of friends who aren’t so obtuse. Other people don’t. This is especially a problem amongst teenagers, where invitations to parties and the like are now given exclusively through Facebook – and habits formed in teenage years can persist for a long time. And that’s just individuals. If you’re a business, or you’re self-employed, woe betide you if you’re not signed up to Facebook, Twitter, LinkedIn and mysociallifesbetterthanyourssothere.com.

Once you’re signed up, social media sites have a very poor record on privacy. Oh, they’ve got an excellent record in producing privacy policies – it’s just that the typical privacy policy roughly says you don’t have any. The reason I left Facebook (apart from endlessly getting contacted by people I was quite happy to have lost contact with) is that I got sick of all the times the site pestered me to add more and more personal information about myself. Facebook’s claim to privacy lost any credibility when they started sharing information with friends’ friends without asking you. Bear in mind that at least one of your Facebook friends is probably trying to break the world record for most Facebook “friends” they don’t even know, so this makes Facebook about as private as announcing your next relationship breakdown with a skywriting plane. I know there are all sorts of opt-outs available in social media, but the combination of apathy and confusing configuration settings renders them largely ineffective. As for safeguards against combining information from different social networking sites to form a highly intrusive profile of you – forget it.

Normally I would argue that privately-owned companies should be able to do what they like. But the very nature of social networks makes sites such as Facebook and Foursquare virtual monopolies. And as private monopolies, they have a lot of power but very little responsibility. Foursquare cannot credibly blame third-party apps for using public information it has collected, and neither can Facebook credibly blame its users for handing over private data it encouraged them to reveal in the first place. We need a serious debate about where social media stands in an increasingly lawless, privacy-disregarding internet. For what it’s worth, I think social media should, at the very least, operate information sharing on a strict opt-in basis. And if any users do wish to share their information, the sites should make it absolutely clear what this means and what the risks are. I don’t know exactly how this should be done, but this push to make users share more and more private information online isn’t doing any good.

If the big social media sites won’t budge, the only other hope is a culture change from the users. Strange as this may seem to some people, until a few years ago the world functioned perfectly well without Facebook. Social media itself is undoubtedly here to stay, but do we really have to keep the whole world informed of every aspect of our wildly trumped-up social lives? Not all techno-crazes stick around – few people today want the latest Jamster ringtone (thank God). It would, I think, be better if this fashion for sharing all your information online became a passing fad – maybe with a return to old-fashioned offline boasting. If this sounds too difficult, just think what we could achieve. When the Establishment creates the Ministry of Online Privacy, we’ll know they’re rattled.

[1] In Foursquare’s defence, it’s only fair to point out they did block access to Girls Around Me as soon as they found out about it. However, all this really proves is that next time you want to use Foursquare for snooping or stalking, you just make sure they don’t know what you’re up to.

-

A harsh lesson for Facebook

As expectations for a free internet increase, more novel ways have to be found to make money. Instagram is a prime example of how not to do it.

“Hello John. You only did six Facebook Status Updates yesterday. Why don’t you buy the new iThing plus max supreme, with new Facebook infinity plugin included?”

There’s a famous scene from the Steven Spielberg classic Minority Report depicting a possible future of advertising. In the film, whenever our hero John Anderton enters a shopping centre, the nearest advertising billboard scans his irises and says “You, John Anderton, need a holiday / designer jacket / ticket to the Superbowl.” (And when he gets a new pair of eyes on the black market, the adverts change to “You, Mr. Yakamoto, need a holiday / designer jacket / ticket to the Superbowl.”)

Like most science fiction films, it sought to portray an uncomfortable vision of the future, in this case one with scant regard for civil rights or privacy. However, it appears that the advertising industry completely missed the point and thought Mr. Spielberg was portraying a rosy future where hard-working businesses can sell more products to consumers through a “relevant advertising experience”. At least, this would explain the logic behind those internet adverts of “57-year-old [Insert location you are accessing internet from] Mom looks 27 – click here to discover her secret”. It would also explain why, when you look at one website, the adverts for that product keep following you to other sites – an action I find comparable to sales reps from Boots following you into Debenhams and Costa to pester you into buying the shampoo you were vaguely browsing.

They haven’t quite reached the technology needed to do full Minority Report-style advertising, but recently a photo-sharing site decided it would join in the fun. Yes, following its recent acquisition by Facebook, Instagram helpfully informed its customers, somewhere in its new terms and conditions, that in one month’s time it would have the right to use your photos for any advertising it wanted. One problem: in most cases, it’s not just the consent of the uploader you need. You also need the consent of the photographer (who is not necessarily the uploader), and for adverts you really need the consent of the people in the photos too. So really the only practical legal way they could use this is to turn people’s photos into personalised adverts directed at them. Not sure what they had in mind – maybe “If you liked these hills, you’ll love the hills in Bratislakislavia, which you can now reach with cheap flights from us. Click Here.” Anyway, we’ll never find out what their plans were, because a massive backlash forced them into a U-turn.

As I’ve said before, it is unfair to vilify a website simply for seeking new ways of getting commercial revenue. I can only think of one major website that runs itself entirely on voluntary donations (Wikipedia), and that is only possible through an unprecedented amount of good will, both from donors and volunteer contributors. The rest cannot run themselves for free. How far it’s morally acceptable to go is open to debate; some web users, for instance, argue that even the most non-intrusive advertising is bandwidth theft, whilst some less scrupulous advertisers would see no ethical issues in, say, putting an ad for a Wonga loan on a debt advice site. The moral debate, however, is a side-show: as it stands, there are very few rules against intrusive advertising. They can do it, and they do.

But no matter how blasé you are about advertising ethics, there is one thing you ignore at your peril: how your customers react when you go too far. And this is where I think Facebook’s management of Instagram is a problem, because Facebook has a record of getting away with anything. It is one of the most heavily-criticised sites for its casual disregard for privacy. And yet every time Facebook makes a controversial change to its privacy policy, Facebook users usually react en masse by either joining a disapproving Facebook group or writing something disapproving in a status update. This is not a sweeping generalisation that all Facebook users are apathetic, but more an observation of how hard it is to vote with your feet. If you leave Facebook, you forfeit your network of Facebook friends. That’s not an issue for people like me who found the whole concept of Facebook friends utterly pointless, but I’m in the minority here. Your Facebook friends won’t be waiting for you on Google+.

That safety net does not apply to Instagram. Migrating to another site is much easier: you just open a new account, upload all your photos, and close your Instagram account. No need to worry about how your friends will view your new site – search engines will pick it up in no time. But with Facebook so used to doing what it likes without consequence, it seems that complacency overruled common sense. And the rest is history. The outcry forced them to back down. Even this may be too late. Those who went through all the trouble of migrating to Flickr are unlikely to bother coming back. Facebook may well have changed Instagram into a $1bn waste of money.

What is most frustrating is that this was completely avoidable. There are plenty of ways of making money without alienating your users. Google Blogger provides AdSense on blogs on an opt-in basis, with a cut for both the blogger and Google, and enough left over to pay for the ad-free blogs. WordPress funds its blogs through a series of optional paid extras, again with enough revenue left over to fund a free blog service. Surely there must be ways for a photo-sharing site to make money? How about, instead of using other people’s photos without permission, scouring Instagram for the best photos and offering a deal to sell them as stock photos? You get a cut, Instagram gets a cut, there’s enough left over to run the site, and there’s the added bonus of an incentive to upload the best possible photos. Sadly, such is the damage to Instagram’s reputation that we’ll probably never know if they could have done this.

Will Facebook learn lessons from this? I hope so. Will advertisers in general learn lessons from this? I suspect not. I suspect they’ve already moved on from Minority Report and they’re now getting inspiration from the “RESUME VIEWING” scene in Black Mirror. It’s the bit where a computer detects you’re looking away from an annoying advert, displays a message saying “RESUME VIEWING” and plays an earsplitting high-pitched noise until you give in and look at the screen again. Luckily, there currently isn’t the technology to create something like this in real life – wait a second, could you adapt a Kinect to do that?

Oh dear. I've got a bad feeling about this.

-

What is going on with Google’s takedown requests?

Iggle Piggle: The new boss of the Pirate Bay?

I know I promised to take a break from Microsoft blog posts, but here’s a third one in a row. Not Windows 8 this time; it's about how Microsoft has got itself in the news for the wrong reasons. It’s been spotted that Microsoft has been sending automated copyright infringement notices to Google claiming that its copyrighted material is being infringed by sites such as, err … the BBC and its well-known hotbed of online piracy, CBeebies. The BBC was unaffected as it’s on a Google whitelist, but other sites weren’t so lucky, including perfectly reputable sites such as AMC Theatres and RealClearPolitics.

First of all, embarrassing though this is for Microsoft, it’s not fair to single them out. Their only crime is getting caught. The majority of copyright enforcement comes from the big film and record labels. As I’ve previously written, wanting to protect their material is reasonable, but their record of heavy-handedness isn’t. Although Microsoft has been criticised for collusion with the record companies, on this issue of dodgy automated takedown requests, I imagine the record companies are doing the same, if not more.

The root problem is that online piracy and copyright is a horribly complicated issue, and it doesn’t help that rules designed to be fair are being abused on both sides. I’ve looked at Google’s information on takedown requests and it seems fair and even-handed, so what’s going wrong? To consider this, let’s go back to the beginning.

First of all: something has to be done. I don’t want to repeat my arguments, but the short answer is: i) films and music can’t be made for free, and ii) the pirates forfeited any moral high ground when they started raking in huge profits. But, as we all know, with the pirates swift to place material on foreign servers out of reach of the law, and to move on every time a takedown writ is issued, lawsuits alone don’t do the job. So attention turns (in part) to making it harder to find the stuff in the first place; the idea being that if it takes effort to pirate your new favourite single, you might decide that paying 99 whole pennies for a legal download might not be such a bad option after all. One obvious thing you can do is stop illegal downloads showing up in Google search results. No grounds for the pirates to complain – it’s not Google’s job to make life easier for them. So far, I have no objections. And then things start to get messy.

There are two snags. Firstly, Google is an automated service covering gazillions of websites, and it is simply not practical for the staff at Google to fully investigate every claim. Secondly, a lot of copyright holders have been getting greedy and claiming copyright over things that aren’t theirs. And it’s not only pirates on the receiving end: film studios have tried to get unfavourable reviews hidden, businesses have tried to get rival companies blocked from Google, and employers have tried to get critical employees’ blogs delisted. Google says it refused these requests, but those were easy calls because the copyright grounds were blatantly frivolous. One must wonder how many borderline cases are getting pushed through by the side with the bigger legal team.

But, this worry aside, Google makes a good attempt at a fair copyright policy. If you want to claim copyright over someone else’s web content, you have to back it up with an affirmation that this is true to the best of your knowledge. If you’re found to be lying, you might face criminal charges. Google, in turn, informs webmasters of alleged copyright infringements and gives them a chance to state their case. Again, if you lie when making a counter-claim, you can also end up in court. This seems fair enough – reporter or defendant, if you are in the right you shouldn’t mind making yourself answerable to the law. That ought to weed out most pirates without giving copyright claimants undue power – or so one would think.

In the last few months, things have suddenly changed. There has been an explosion of takedown requests, now seven times the level of June. How has this happened? It’s not as if there’s been a sudden surge in pirated material, so I can only assume it’s a surge in copyright claims. Presumably lots of companies, not just Microsoft, have started sending automated notices of copyright infringement to Google. To some extent, you can argue it is necessary to do this in order to keep up with pirates repeatedly re-uploading the same material. But as the recent debacle with Microsoft and CBeebies shows, these automated requests can get it badly wrong.
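Purely as an illustration of the kind of safeguard I have in mind, here is a minimal Python sketch of how a rights holder’s automated system might triage candidate URLs before anything is sent to Google. Everything in it is hypothetical: the `Match` record, the similarity `score` and its 0.9 threshold, and the whitelist (containing only `bbc.co.uk` here) are my own assumptions, not how Microsoft’s, Google’s or anyone else’s real system works. The idea is simply that anything on a trusted domain, or any weak match, goes to a human instead of being fired off automatically.

```python
from dataclasses import dataclass
from urllib.parse import urlparse

# Hypothetical whitelist: domains that should never be reported automatically.
TRUSTED_DOMAINS = {"bbc.co.uk"}


@dataclass
class Match:
    url: str
    score: float  # hypothetical 0.0-1.0 confidence that the page really hosts the work


def is_trusted(url: str) -> bool:
    # Match the domain itself or any subdomain (e.g. www.bbc.co.uk).
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in TRUSTED_DOMAINS)


def triage(matches: list[Match], threshold: float = 0.9) -> tuple[list[str], list[str]]:
    """Split matches into (send automatically, hold for human review)."""
    auto, review = [], []
    for m in matches:
        if m.score >= threshold and not is_trusted(m.url):
            auto.append(m.url)
        else:
            review.append(m.url)
    return auto, review


auto, review = triage([
    Match("http://pirate.example/crack.zip", 0.97),
    Match("http://www.bbc.co.uk/cbeebies/", 0.95),
    Match("http://blog.example/review", 0.40),
])
print(auto)    # ['http://pirate.example/crack.zip']
print(review)  # ['http://www.bbc.co.uk/cbeebies/', 'http://blog.example/review']
```

The threshold is a trade-off between missing genuine infringements and flagging innocent sites; the point is that a person, who can be held answerable, signs off on the doubtful cases.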

The current consensus seems to be that it’s all Microsoft’s fault and not Google’s, with Google apparently only doing what it legally had to do. I don’t quite agree. Google has to take its share of responsibility. It’s one thing taking the word of a human who stands to go to court if found to be lying; it’s another thing to take the word of a computer. Neither Microsoft nor any other company should be able to use “computer errors” as an excuse to make false copyright accusations with impunity, and it’s up to Google to put its foot down. If I was in charge of Google, I would think twice about accepting automated reports; at the very least, Google should only allow them if Microsoft and everyone else can demonstrate they’re taking steps to stop false positives. Few people would argue that Google search results alone are going to stop piracy – the most they can hope to achieve is to persuade some casual pirates that legal downloads are easier – but it would be a stupid own goal if the moves to stop piracy were derailed by a faulty computer program.

-

Lessons from the Narwhal

There is a lot at stake with the new user interface in Windows 8. Ubuntu’s experience from 2011 gives us clues for how this might work out.

Brace yourself Microsoft. It's your turn now.

With a new Windows version coming out, Windows 8 is of course dominating the tech blogs. I haven’t looked much myself, but I’m assuming there’s gushing praise from Microsoft fanboys and scathing remarks from the hardcore Mac and Linux fans. I really have no appetite for a string of blog posts on one product, but having had a look at Windows 8, there’s now one extra thing besides the Windows Store that’s grabbed my attention, and that’s the Metro interface (it’s now called the “Modern UI” due to a trademark row, but everyone’s still calling it Metro). I promise to move on to something else next time.

This new interface has grabbed a lot of attention, and not all of it good. Microsoft’s aim is to make Windows 8 friendlier for tablet users, a market where they desperately want to compete with Apple and Android, but they risk alienating their desktop customers. I have now tried out the interface and I can confirm it’s a right pain in the bum to operate with a mouse compared to the Start menu it replaced. I can see this being good for touchscreens, but there’s no sign touchscreens are going to replace keyboard, mouse and monitor in the office. Usability is a major issue for mass consumer software, and from the sound of some commentators you’d think this was Windows suicide.

Well, the good news for Microsoft is that there’s a favourable precedent here. Two years ago Canonical did something similar with Ubuntu 11.04 aka Natty Narwhal and its controversial Unity interface. There were a number of factors behind this decision, touchscreen-friendliness being just one of them, but the Ubuntu faithful gave it the same scornful reception the Microsoft faithful are giving Metro now. I was just as sceptical about Unity then as I am about Metro now. In fact, after trying out Unity on my test partition, I decided to upgrade to Ubuntu 11.04 – but only once I knew how to force it back to the old interface.

And here’s the good news. I kept Unity on my laptop and netbook (Unity was partly designed as a space-optimised interface for small-screen netbooks), and after a while started to understand where it was coming from. The Dash was a nightmare to use as a replacement for the old Applications menu (the Linux equivalent of the Start Menu), requiring numerous extra clicks to launch a program. But once you put all your key programs in the sidebar (which, realistically, is unlikely to be much more than the web browser, word processor and spreadsheet for most people), that’s not a big issue. I found the buttons at the side a good way of keeping track of different windows belonging to the same program (very similar to the Windows 7 taskbar), and when used in conjunction with the new-look virtual desktops they become a powerful way of organising all your windows. There were a couple of features I felt were more trouble than they were worth on desktops (the overlay scrollbar and the global menu), but they were easily disabled. When the next release came out six months later and the Unity interface had been refined a bit, I finally made the leap. And this was the experience of a lot of users.

And this is an important lesson in usability for Microsoft and everyone else: it can take months or even years to know if an interface change is a success. I’ve said previously that usability testing is hard because developers and testers, by definition, don’t know what it’s like to be a novice on a computer. One solution is to bring in non-technical people for usability tests, but this example shows a limitation: how can a few days or even a few weeks of testing tell you what users will think in six months’ time? We know from Ubuntu that it can take months or years for a change to gain acceptance from your users. Canonical and Microsoft both chose to ignore the sceptics, go ahead anyway and hope for the best. Canonical got away with it for Unity, and that’s where there’s hope for Microsoft and Metro.

But it would be foolish to use Unity as proof that it’ll all be fine in the end. There’s a fine line between introducing unpopular changes that gain acceptance over time and imposing unpopular changes that stay unpopular. Facebook’s timeline looks set to be the latter. The Microsoft Office ribbon is at best a “Marmite change”, where you either love it or hate it. Unity wasn’t a complete success, because some users switched to Linux Mint (an Ubuntu fork that, amongst other things, stuck with the old Windows XP-like interface). In any case, there’s only so far you can go using Linux as a precedent for Microsoft. Linux users are a different demographic group from Windows users: generally more tech-savvy (so more likely to customise their favourite OS from the default settings, but also more likely to switch distros if they get too annoyed), and with generally different expectations. There’s no knowing if a change accepted by Linux users will also be accepted by Windows users.

If it was up to me, I would have made the new “Metro” interface the default for tablets and the old interface – including the Start button – the default for laptops and desktops. I have nothing particularly against this interface; it’s just that Unity struck me as a good all-purpose balance between desktops, notebooks, netbooks and tablets, whilst the new Windows 8 screen strikes me as heavily optimised towards tablets. Or, at the very least, they should make it easy to switch back to the old interface with a few clicks. I know that maintaining two different interfaces is extra work (Linux users who stray from the default settings too much will find themselves running into bugs quickly), but come on: it’s Windows, the highest-selling piece of software in the world. Microsoft can afford to do this.

But it’s unlikely this change of interface will be a Windows killer. Microsoft has many things to worry about – lack of Windows 8 apps, a minor share in the smartphone market, open-source competitors getting better, the prospect of Android making the leap from tablet to desktop – but the Ubuntu experience shows that outrage over user experience tends to be a short-term thing. The real test will be how well Microsoft adapts to the changes in the IT market over the last decade – and it will take more than a change to the start page to make or break Windows.

-

Cross-platform is the way to go

AMD will shortly be enabling Windows 8 users to run Android apps. I would advise Microsoft to welcome and support this.

Mr Ballmer, surely you won't deprive your loyal customers of this?

Last year I wrote a blog article, “The Ghost of Vistas Past”, outlining how important it was to Microsoft that Windows 8 is a success (along with the mistakes from Windows Vista that overshadow the reputation of all future releases). Well, we’re now approaching the release date and I’ve been looking at the pre-release version. I have to say, there have been a lot of Windows 8-bashing comments, but it’s hard to tell whether this is just people getting used to the new tablet-optimised interface or something more. At the moment, this could still be anything from a revolutionary ground-breaker to a Vista Mark II. But I’m going to make Microsoft a helpful suggestion regarding their controversial app store.

Firstly, an app store is a good idea. Linux distros were doing this years before the iPhone existed, when it was called “package management”. It’s good because instead of a mish-mash of programs from installation CDs or the internet, there’s a central database which takes care of all installation and updating. And as your computer keeps track of which packages installed which files, if you want to uninstall anything, you can do it properly, instead of relying on unreliable uninstallation files that came with the program you don’t want. So far, so good.
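To make the uninstall point concrete, here is a toy Python model of the bookkeeping involved. It is a sketch under simplifying assumptions, not how apt, dpkg or any real package manager is implemented: the `PackageDB` class, the package names and the file paths are all made up for illustration. Each package’s file list is recorded at install time, and a file is only deleted when no remaining package still owns it.

```python
from dataclasses import dataclass, field


@dataclass
class PackageDB:
    """Toy package database (hypothetical): tracks which package owns which file."""

    manifests: dict[str, set[str]] = field(default_factory=dict)  # package -> files it installed
    owners: dict[str, set[str]] = field(default_factory=dict)     # file -> packages that own it
    filesystem: set[str] = field(default_factory=set)             # files currently on "disk"

    def install(self, package: str, files: list[str]) -> None:
        # Record every file the package installs, so removal can be done properly later.
        self.manifests[package] = set(files)
        for path in files:
            self.owners.setdefault(path, set()).add(package)
            self.filesystem.add(path)

    def uninstall(self, package: str) -> list[str]:
        # Remove only files that no other installed package still owns.
        removed = []
        for path in sorted(self.manifests.pop(package, set())):
            holders = self.owners[path]
            holders.discard(package)
            if not holders:
                del self.owners[path]
                self.filesystem.discard(path)
                removed.append(path)
        return removed


db = PackageDB()
db.install("libreoffice", ["/usr/bin/soffice", "/usr/share/fonts/shared.ttf"])
db.install("inkscape", ["/usr/bin/inkscape", "/usr/share/fonts/shared.ttf"])
print(db.uninstall("libreoffice"))  # ['/usr/bin/soffice']
print("/usr/share/fonts/shared.ttf" in db.filesystem)  # True
```

Uninstalling `libreoffice` here removes its own binary but leaves the shared font in place, because `inkscape` still needs it; a standalone uninstaller has no reliable way of knowing that.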

The problem is that the range of official Windows 8 apps is reportedly very low. As late as last month (September), it was being reported there were only 2,000 apps, compared to 500,000 for Android. You can argue that it’s good to have a small number of apps that you know are high-quality and reliable, but that’s no good if you can’t find the app that does the job you want. There’s a debate around whether Microsoft is being too stringent accepting apps in the first place,[1] but the real obstacle is incentive to write these apps. There’s no getting round the fact that Apple and Android, having got to the smartphone and tablet markets first, dominate the market. Just like Linux suffered for years from lack of software when Windows dominated the desktop market – which Microsoft used against it – some might say that Microsoft is getting a taste of its own medicine in the smartphone and tablet market.

But help is at hand from AMD. As a result of a collaboration with BlueStacks, it will shortly be possible to run Android apps in Windows 8, on desktops, laptops, tablets, and possibly smartphones. Even if, as Microsoft hopes, their app store is a bastion of high-quality apps, by adding the choice of Android apps you turn Windows 8 tablets into far more versatile devices. Can’t wait for the latest Angry Birds to be ported to Windows 8? No problem, just install the Android version and away you go.

And the response from Microsoft? Apparently nothing. Their strategy was to encourage developers to write more apps for Windows 8, so I assume this strategy is unchanged. I can’t understand the logic behind this. With Windows Phones still a niche product with no immediate prospect of growth, it makes no commercial sense to write an app for Windows instead of the big two. Even porting an app from one operating system to another is tricky. If neither app writers nor Microsoft are able to put in the work getting apps to run on Windows, one would have thought they’d have welcomed AMD doing the job for them.

And leaving AMD to do their own thing is far from a safe bet. We don’t yet know how reliable this will be. In theory, you can run Windows programs on Linux using Wine, but this is such a nightmare to get working that many Linux users don’t bother and use the closest equivalent native Linux program instead. The problem is the mass of fiddly settings you need to tweak to get a Windows program to interface properly with all the Linux components: sound, graphics, printers, internet, you name it. Crossover is reputedly better, but only because you pay people to do all this fiddly work for you. Even so, the range of programs certified to work with Crossover is limited. How much work is AMD going to have to do to get 500,000 Android apps working in Windows? It will depend a lot on how clever their cross-platform component is, and only time will tell. But it would be an awful lot easier if Microsoft threw its weight behind this. They could integrate it into Windows 8, they have deep enough pockets to test and tweak all the apps they want, and I’m sure they would do a better job than AMD and Bluestacks going it alone.

I can only imagine that the one thing Microsoft stands to lose is pride, especially after years of telling their customers they’re best off sticking to Microsoft Windows, Microsoft Office, Microsoft Internet Explorer, Microsoft Exchange, and Microsoft Everything (or, only where Microsoft doesn’t have a program, something written for Microsoft Windows).[2] It’s one thing being behind in the smartphone/tablet market, but quite another thing to admit it by welcoming apps written for a rival. And it could raise awkward questions. If Microsoft is depending on apps written for Linux-based Android to sell Windows 8, how can they justify refusing to port Microsoft Office to Linux? Serious question.

My advice to Microsoft is that, in the long run, they would be better off forgetting about Microsoft Everything and going back to competing in a cross-platform world. At the moment, Microsoft are hoping that as long as customers need Windows to run Word and Excel, they’ll buy Windows, but with Libreoffice catching up on everyday functions, and file compatibility improving, customers may soon question whether they need Word or Excel in the first place. But what Libreoffice won’t be matching any time soon is the advanced features of MS Office. Crossover’s chief selling point is running MS Office on Linux, and many Linux users pay for this. Remove the complication of a compatibility layer and you can expect demand to increase. For every risk posed to Microsoft by going cross-platform, there’s an opportunity.

Will Microsoft embrace a multi-platform approach? So far, it’s hard to imagine them dropping their old models. In 2005, technology columnist Bill Thompson hypothesised a future where Microsoft re-dominates the IT market with its own version of Linux called Micrix. But he didn’t seriously expect Microsoft to even remotely consider this route, and they didn’t. Seven years on, I still don’t expect anything this radical, but there is one crucial change: Apple is rapidly overtaking Microsoft as the all-controlling bad guy. Can Microsoft reinvent itself as the guardian of a free IT system, and relive the heyday of Windows 95, the OS that freed you to do anything with your computer?

The daft thing is that Microsoft is slow to recognise its own cross-platform successes. The Microsoft Kinect, as well as being quite successful on the Xbox, is a very popular accessory for all sorts of other uses. But it wasn’t until hackers took matters into their own hands that Microsoft realised they were on to a winner. Could we see the same take-up for Android apps in Windows? Microsoft Office on Android tablets? The sooner Microsoft sees this as a good thing, the better.

[1] Of course, the most notoriously stringent app store is Apple’s. The crucial difference is that Apple, as the first entrant into the commercial app market, can get away with it. Few developers want to cut themselves out of Apple’s app market, however many hoops they have to jump through. With the smaller Windows Phone market, the same app developers might decide it’s not worth the hassle.

[2] Although, to be fair, this is still an improvement on Apple. At least with all things Microsoft you get a free choice of hardware. Under Apple’s ideal, you don’t even get a choice on that.

Politics versus Plan B

There is no more important place to get IT projects right than central government. Unfortunately, internal politics encourages the opposite.

This is a visual metaphor, with little or no relevance to the actual article.

Who’d be a prime minister? On one day it’s all “We Love You Dave/Gordon/Tony”, then the moment you’re under 35% in the opinion polls it’s a catalogue of everything your government’s doing wrong. This year it’s been the granny tax, pastygate, the petrol non-strike, G4S and the West Coast rail franchise to name a few. All that’s missing is a good old IT shambles. After all, the last government kept us busy with the lost child benefit discs and the ill-fated NHS system. Well, for all you restless journalists itching for a story, I recommend you keep an eye on the upcoming Universal Credit benefit system.

In case you’re not following UK politics on an hourly basis, Universal Credit is a plan to merge a number of key benefits such as jobseekers’ allowance and tax credits into a single system. Benefits are a controversial issue right now, but this is a software testing blog, and the issue of interest is that this is all dependent on a new IT system being developed. Now, before I go any further, I must stress I don’t know anything about how this project is going. For all I know, it could be going swimmingly. But what if it isn’t? There doesn’t seem to be any kind of Plan B ready if the project falls behind schedule. And if this happens, it won’t be the first time: I worked on the last IT project where that happened.

Which IT project, I hear you ask? Well, please don’t be too harsh, it wasn’t my idea, they made me do it, but – I did software testing for ID cards. Yes, those ID cards. Remember them?

Now, I could give you a blow-by-blow account of ID cards testing, and at some point I probably will. However, the one overriding lesson I learnt from that project was the danger of mixing politics and software testing, especially when it’s such a controversial scheme. And I’m not just talking about party politics, but also internal politics. The result of this, the thing that made the project such a nightmare, was the Identity and Passport Service committing itself to a deadline it couldn’t meet.

I don’t know why IPS signed up to such an unrealistic deadline, but I can guess. When you have a project as politically controversial as ID cards, no government wants to show any sign of weakness; they say it’s going ahead and say when it’s going ahead (preferably far enough ahead of the next election to make it difficult for a future government to reverse it). Senior civil servants, meanwhile, are eager to demonstrate a “can do” attitude and make promises on when a project can be delivered. Once a date is set, any slippage is politically toxic for both. The press and opposition will see it as a U-turn and hammer the government. The civil servants who promised delivery on time get it in the neck for failing to deliver. Politically, it’s far better to stick to the date you set. Plan B? There is no Plan B.

But whilst this might have been a prudent political decision, it was a terrible IT decision. For a system as complex as ID cards that you are building from scratch, there was no way of predicting when it would be ready. And, worse, I can only assume the people who set these deadlines didn’t properly understand IT projects, because the moment I saw the timescale intended for testing I could tell it wasn’t realistic. Inevitably, the project descended into what is affectionately known as a Death March. Slippages in programming were compensated for with cuts in testing. With the delivery date set in stone, the only way to meet the deadline was to declare the system ready to go, when the reality was quite different.

Fast forward to 2012, and I fear that not everyone has learnt the lessons. In fairness, when Iain Duncan Smith was questioned by a Select Committee last month,[1] he did try to explain that this wasn’t an October 2013 “big bang” and that Universal Credit would instead be introduced in stages. But when a Downing Street spokesman was asked whether the October 2013 start date could be allowed to slip, the response was simply, I quote, “It's on track to be implemented in that timetable.” Which, at the risk of bringing up the same analogy again, is like responding to a question about lifeboat capacity with “The Titanic is unsinkable.”

In IPS’s defence, when the beta-quality ID cards system went live, they did at least have the sense to keep the flow of customers manageable. Rather than open the floodgates on day one with the first 100 enrolments, they took them in a few at a time, allowed for time lost to the inevitable bugs, and only ramped up the intake as and when the system was stable enough to take it. I’m not sure whether such a safeguard will exist for Universal Credit. The ID cards system was a pilot designed for, at most, tens of thousands of records. Benefits records, however, run into millions. Are we looking at transferring, say, a million cases onto the new system by December 2013, ready or not? Even if well-intentioned managers at the DWP are trying to learn lessons and keep the timescale sane, will they be leaned on by ministers or permanent secretaries to hurry up? At the moment, I can’t rule this out.

There is no greater enemy to software testing than politics, be it party politics, management politics or just plain office politics. If you want testers to do their jobs properly, you must be prepared for them to tell it as it is, even if it’s not what you want to hear. You won’t get this in a culture where everyone is expected to be “positive” (i.e. anyone who expresses concerns is ignored or told to shut up). The opposition and press would do well to keep an eye on the Universal Credit system and keep their laptops poised. The government and civil service would do well to realise that it always pays to have a Plan B.

[1] Actually, I don’t think it should have been Iain Duncan Smith answering IT queries at all. Government ministers can only be as accurate on technical matters as the briefings they have been given. I’d much rather that, on important IT matters, Select Committees directly questioned the people responsible for the IT, rather than using the minister as a go-between. This, of course, relies on the IT people answering questions honestly, which won’t happen if they’re worried about speaking out of line with their department. It could take a big culture change before politicians get any chance of knowing what’s really going on in flagship government projects.

Where's H. G. Wells when you need him?

Is advertising really legalised lying? In cyberspace, it seems, the answer is still yes.

Okay, I’m back. Sorry about my long absence from this blog. Much as I enjoy writing a blog on software testing, actual software testing got in the way and I’ve been super-busy for the best part of two months. But that work has finally come to a close, so I can now get back to this. And the thing that I’ve wanted to get off my chest for the last two months is a pet hate of many people: internet advertising. Yes, I can hear you all now going "Oh God, I hate those things".

Bad and wrong. But is this coming to YouTube?

I’ll start with an obvious defence: if we want an internet, we need ads. Some websites, such as this one, are written by people in their spare time (which can be sporadic, as this one has just shown), whilst others, such as BBC News, are funded by other means. But for many sites, somebody has to be paid to create the content, and the only source of revenue is the website itself. Even ad-free sites can depend on adverts. This blog, for instance, has no adverts, and I want to keep it that way, but I’ll admit that Blogger would never have developed the blogging tools and hosted the blog for free without the cut Google gets from adverts on other blogs they host. There are some interesting suggestions for online micro-payments as an alternative to ads or subscriptions, but there is little interest in making this a reality. Like it or not, adverts are just as much a part of the internet as they are of ITV.

And the obvious complaint? Internet ads are an absolute pain in the backside. At least on ITV they leave you alone while you’re watching the programme. Web adverts, on the other hand, seem hell-bent on grabbing your attention when you’re trying to read something else. All too often they rely on big flashing boxes, garish animations, and the ad itself leaping out of the box and covering the rest of the page. As well as being immensely irritating, this also makes a lot of pages that would otherwise have been fine inaccessible to people with disabilities. It’s little wonder people are turning in droves to products like AdBlock Plus. [1]